I am quite fascinated by the potential of the deep dream generation process, brought to light by Google researchers trying to explain how their artificially intelligent system recognises objects in photos (unfortunately that had started with discovering pictures of cats on social media; I have been known to unfollow/unfriend people who over-post cat photos). To demonstrate the way their neural networks work, they showed the state of the neural network as it tried to recognise characteristic shapes, outlines, colours and textures/patterns within a given image. As you go deeper and deeper into the layers of the neural network you get wonderfully focused images that eventually become very surreal (trippy) and dream-like. Hence the name of the project: Deep Dream.
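The "amplify what a layer sees" idea can be sketched in a few lines. This is only a toy, not Google's code: the "image" is a short list of numbers, the "layer" is a single hand-picked edge filter, and the names `activation`, `gradient` and `dream` are made up for illustration. It assumes the usual description of Deep Dream, where the image is nudged again and again in the direction that makes a chosen layer respond more strongly (gradient ascent).

```python
def activation(image, kernel):
    """Mean squared response of one 1-D filter slid across the image."""
    n = len(image) - len(kernel) + 1
    responses = [sum(k * image[i + j] for j, k in enumerate(kernel))
                 for i in range(n)]
    return sum(r * r for r in responses) / n


def gradient(image, kernel):
    """Derivative of activation() with respect to every pixel."""
    n = len(image) - len(kernel) + 1
    grad = [0.0] * len(image)
    for i in range(n):
        r = sum(k * image[i + j] for j, k in enumerate(kernel))
        for j, k in enumerate(kernel):
            grad[i + j] += 2.0 * r * k / n
    return grad


def dream(image, kernel, steps=20, step_size=0.1):
    """Gradient ascent on the pixels: exaggerate what the filter responds to."""
    image = list(image)
    for _ in range(steps):
        grad = gradient(image, kernel)
        # Normalise so the largest pixel change per step equals step_size.
        scale = max(abs(g) for g in grad) or 1.0
        image = [p + step_size * g / scale for p, g in zip(image, grad)]
    return image


# Hypothetical toy data: six "pixels" and a simple edge detector.
toy_image = [0.1, 0.5, 0.2, 0.8, 0.3, 0.6]
edge_filter = [-1.0, 1.0]
dreamed = dream(toy_image, edge_filter)
print(activation(toy_image, edge_filter), activation(dreamed, edge_filter))
```

The second number printed is larger than the first: the edges the filter already responded to have been exaggerated. A real network does the same thing with thousands of learned filters stacked in layers, which is roughly where the dream-like shapes come from.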

There are many competing systems around now, and I probably still prefer Dreamscope on the web and Lucid on my Android phone. The best variations of the deep dream approach also allow you to use your own photos or artwork to train the neural network that is then applied to your intended photo. There is a fair amount of computing involved in training these neural networks, but Google and the other services handle this by spreading the task across their clouds of servers. It still takes time, though: the deeper you want to go and the larger the output required, the longer it takes (Google seems to abandon the task after 15 minutes, not sure why yet).

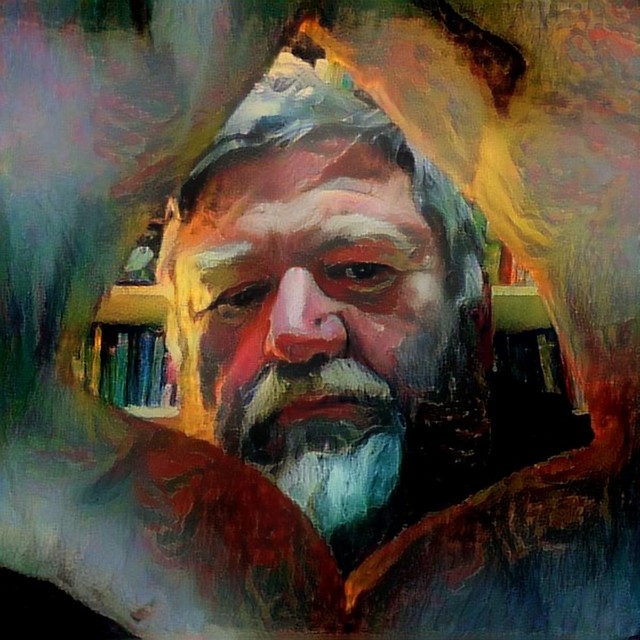

I then fed a square-cropped version of my self-portrait into another deep style run, but this time I used the interim photo/sketch as the image to train a new neural network upon. This is why I am calling this the third generation (1. original photo, 2. pastel-like photo/sketch, 3. neural network filter).
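Style filters of this kind are often described as matching the texture statistics of the style image rather than its layout. Whether Dreamscope or Lucid work exactly this way is an assumption; the sketch below follows the commonly described Gram-matrix approach to neural style transfer, with made-up helper names (`gram_matrix`, `style_loss`) and tiny hand-written "feature maps" standing in for real network activations.

```python
def gram_matrix(features):
    """Correlations between feature channels.

    `features` is a list of channels, each a flat list of activations
    (one value per image position).
    """
    n = len(features[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in features]
            for ci in features]


def style_loss(features_a, features_b):
    """Squared difference between the two Gram matrices."""
    ga, gb = gram_matrix(features_a), gram_matrix(features_b)
    return sum((x - y) ** 2
               for row_a, row_b in zip(ga, gb)
               for x, y in zip(row_a, row_b))


# Hypothetical activations: 2 channels, 3 positions each.
style = [[1, 2, 3], [4, 5, 6]]
same_marks_moved = [[3, 1, 2], [6, 4, 5]]   # same values, different positions
different_marks = [[1, 0, 0], [0, 0, 1]]

print(style_loss(style, same_marks_moved))
print(style_loss(style, different_marks))
```

Moving the same marks to different positions leaves the loss at zero, while different marks give a positive loss. That is one way to see how a pastel-like sketch can lend its mark-making to a new image without copying its composition.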

The result was indeed a good combination of the colours and feel of the original photo, along with the style of marks you might get from pastels, and yet I was still recognisable, peering between my hands forming a diamond-shaped frame.

I like where this approach is going.
